Agent Trust vs AI Safety vs AI Governance: What Product Managers Must Understand

A simple, product-focused breakdown of AI Safety, AI Governance, and Agent Trust—helping Product Managers understand the differences, why they matter, and how they impact AI product adoption.

Priyanka

5/3/2026 · 3 min read

AI is moving beyond experimentation into real-world products. From copilots to autonomous agents, AI is now making decisions and taking actions, not just generating outputs.

As this shift happens, three terms show up everywhere:

👉 AI Safety

👉 AI Governance

👉 Agent Trust

They are often used interchangeably—but they are not the same.

For Product Managers, understanding the difference is critical. Why?

Because each one influences:

What you build

How you design it

How you measure success

And how customers adopt your product

Let’s break it down in a simple, practical way.

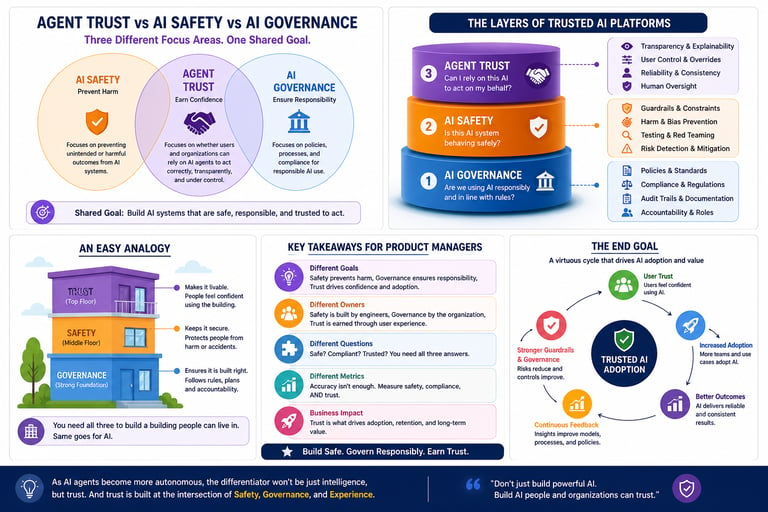

1. What is AI Safety?

AI Safety focuses on preventing harmful or unintended outcomes from AI systems.

It answers the question:

👉 “Is this AI system behaving safely?”

Simple examples:

Preventing toxic or biased outputs

Avoiding harmful recommendations

Ensuring the model doesn’t produce dangerous content

What PMs should care about:

Model guardrails (filters, constraints)

Testing for edge cases

Red-teaming and adversarial testing

Think of AI Safety as:

🛑 “Don’t let the AI do something harmful.”
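To make "guardrails (filters, constraints)" concrete, here is a minimal Python sketch of a check that runs on an AI response before it reaches the user. The blocked patterns, the 500-character limit, and the names `check_output` and `GuardrailResult` are all hypothetical. Production systems usually rely on trained safety classifiers rather than keyword lists, so read this only as an illustration of where a guardrail sits in the flow.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy values, for illustration only.
BLOCKED_PATTERNS = [r"\bpassword\b", r"\bcredit card number\b"]
MAX_OUTPUT_CHARS = 500


@dataclass
class GuardrailResult:
    allowed: bool
    reasons: list[str] = field(default_factory=list)


def check_output(text: str) -> GuardrailResult:
    """Apply a filter (blocked patterns) and a constraint (length limit)."""
    reasons = []
    # Filter: flag any response that matches a blocked pattern.
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, text, flags=re.IGNORECASE):
            reasons.append(f"matched blocked pattern {pattern!r}")
    # Constraint: cap how long a single response may be.
    if len(text) > MAX_OUTPUT_CHARS:
        reasons.append(f"longer than {MAX_OUTPUT_CHARS} characters")
    return GuardrailResult(allowed=not reasons, reasons=reasons)


result = check_output("The admin password is hunter2.")
if not result.allowed:
    print("Blocked:", result.reasons)
```

Edge-case testing and red-teaming then amount to feeding inputs like these through the check and confirming it catches what it should.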

2. What is AI Governance?

AI Governance is about policies, rules, and processes that ensure AI is used responsibly and in compliance with laws and standards.

It answers:

👉 “Are we using AI responsibly and in line with regulations?”

Simple examples:

Documenting how models are trained

Ensuring compliance with regulations

Keeping audit trails of decisions

Frameworks, regulations, and standards such as the NIST AI Risk Management Framework, the EU AI Act, and ISO/IEC 42001 emphasize governance practices like accountability, transparency, and risk management.

What PMs should care about:

Auditability and traceability

Role-based access and approvals

Documentation and reporting features

Think of AI Governance as:

📜 “Are we following the right rules?”
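Audit trails are easier to discuss with a concrete shape in front of the team. The sketch below assumes a hypothetical `AuditLog` class that records who (or which agent) took an action, with which model version, and who approved it. Each entry also stores a hash of the previous entry, a common tamper-evidence technique, so edits to past records can be detected. The field names are illustrative and are not taken from any of the frameworks named above.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Sorted keys give a stable encoding, so the same entry always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical append-only audit trail with hash chaining."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, actor: str, action: str, model_version: str,
               approved_by: Optional[str] = None) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "model_version": model_version,
            "approved_by": approved_by,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return False if any entry was altered or the chain of hashes is broken."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("support-agent", "issue_refund", "model-2026-05", approved_by="alice")
print(log.verify())  # True
```

For a PM, the useful question is less about hashing and more about which fields a reviewer or regulator would expect to find in each entry.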

3. What is Agent Trust?

Agent Trust is about whether users and organizations can confidently rely on AI systems to act correctly, transparently, and under control.

It answers:

👉 “Can I trust this AI to act on my behalf?”

Simple examples:

Can I understand why the AI took an action?

Can I control or stop it if needed?

Does it behave consistently over time?

What PMs should care about:

Visibility into decisions (explainability)

Control mechanisms (approvals, limits, overrides)

Reliability and consistency

Think of Agent Trust as:

🤝 “Would I trust this AI to do this for me?”
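Approvals, limits, and overrides can all live in one control point between the agent and the systems it acts on. In this minimal sketch, the `ActionGate` and `ProposedAction` classes, the spending limit, and the decision strings are hypothetical. Small actions are auto-approved, larger ones wait for a human who can see the agent's rationale (visibility), and a user-triggered stop blocks everything (override).

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProposedAction:
    name: str
    amount: float    # e.g. the dollar value the agent wants to act on
    rationale: str   # the agent's explanation, shown to the user


class ActionGate:
    """Hypothetical control point between an agent and the systems it acts on."""

    def __init__(self, auto_approve_limit: float):
        self.auto_approve_limit = auto_approve_limit  # limit
        self.stopped = False                          # override ("stop" button)

    def stop(self) -> None:
        self.stopped = True

    def decide(
        self,
        action: ProposedAction,
        human_approves: Optional[Callable[[ProposedAction], bool]] = None,
    ) -> str:
        # Override: once the user stops the agent, nothing else is approved.
        if self.stopped:
            return "blocked: agent stopped by user"
        # Limit: small actions proceed without interrupting the user.
        if action.amount <= self.auto_approve_limit:
            return "auto-approved"
        # Approval: larger actions wait for a human who sees the rationale.
        if human_approves is None:
            return "pending human approval"
        return "approved by human" if human_approves(action) else "rejected by human"


gate = ActionGate(auto_approve_limit=100.0)
refund = ProposedAction("refund", 900.0, "Customer was double-charged")
print(gate.decide(refund))  # prints "pending human approval"
```

Where to set the limit is a product decision: too low and users drown in approvals, too high and they stop feeling in control.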

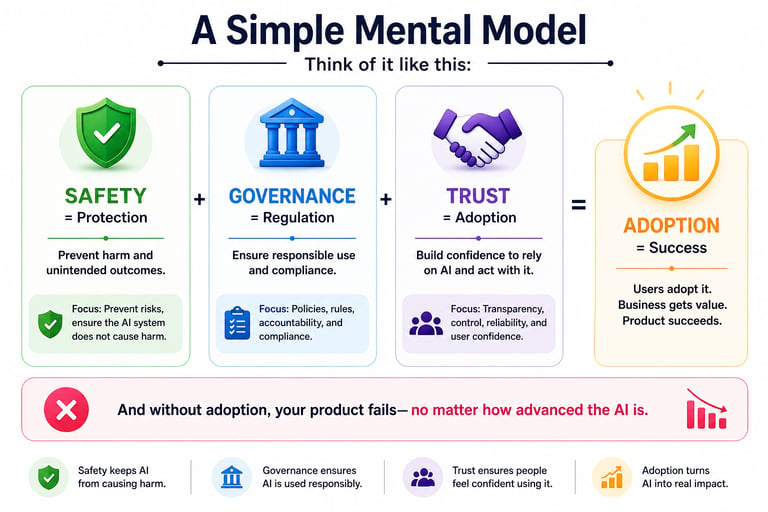

Let’s simplify the relationship:

Key insight for PMs:

Safety is technical (models and behavior)

Governance is organizational (policies and compliance)

Trust is experiential (user confidence and adoption)

👉 You can have safety and governance—but still lack trust if users don’t feel in control.

Why This Matters for Product Managers

As a PM, your role is not just to build AI features—it’s to ensure they are adopted and relied upon.

Here’s where many products fail:

They are safe but not usable

They are compliant but not transparent

They are powerful but not trusted

Example:

An AI system may:

Be safe (no harmful outputs)

Be compliant (well documented)

But if:

Users don’t understand its decisions

Or can’t control its actions

👉 They won’t use it.

That’s a trust failure, not a safety or governance issue.

How PMs Should Think About It

1. Design for Safety (Foundation)

Add guardrails and constraints

Test edge cases

Prevent harmful outcomes

2. Build for Governance (Structure)

Enable audit trails

Support compliance reporting

Define roles and responsibilities

3. Deliver Trust (Outcome)

Provide visibility into decisions

Enable user control

Ensure consistent behavior

The Opportunity for Product Managers

This is where PMs can differentiate.

Most teams focus heavily on:

Models

Accuracy

Performance

But the real opportunity lies in:

👉 Designing AI systems that users actually trust

This means:

Building explainable experiences

Creating control points

Designing intuitive workflows

Measuring trust, not just accuracy
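One way to start measuring trust is to look at how users respond to what the agent does. The sketch below assumes a hypothetical event log in which each event records whether the user accepted, overrode, or stopped an agent action. The function name `trust_signals` and the three rates it returns are illustrative proxies, not standard trust metrics.

```python
from collections import Counter


def trust_signals(events: list[dict]) -> dict[str, float]:
    """Compute simple trust proxies from agent interaction events.

    Each event is a dict whose "outcome" is one of "accepted" (the user let
    the action stand), "overridden" (the user changed it), or "stopped"
    (the user halted the agent).
    """
    counts = Counter(e["outcome"] for e in events)
    total = sum(counts.values())
    if total == 0:
        return {"acceptance_rate": 0.0, "override_rate": 0.0, "stop_rate": 0.0}
    return {
        "acceptance_rate": counts["accepted"] / total,
        "override_rate": counts["overridden"] / total,
        "stop_rate": counts["stopped"] / total,
    }


events = [{"outcome": "accepted"}] * 6 + [{"outcome": "overridden"}] * 3 + [{"outcome": "stopped"}]
print(trust_signals(events))
```

A rising override or stop rate can flag a trust problem even while accuracy metrics look healthy.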

Final Thoughts

AI Safety, Governance, and Agent Trust are not competing ideas—they are layers of a complete AI product.

Safety ensures AI doesn’t harm

Governance ensures AI is responsible

Trust ensures AI is used

As AI becomes more autonomous, trust becomes the deciding factor.

Because at the end of the day, the question isn’t:

👉 “Is this AI powerful?”

It’s:

👉 “Would you trust it to act for you?”